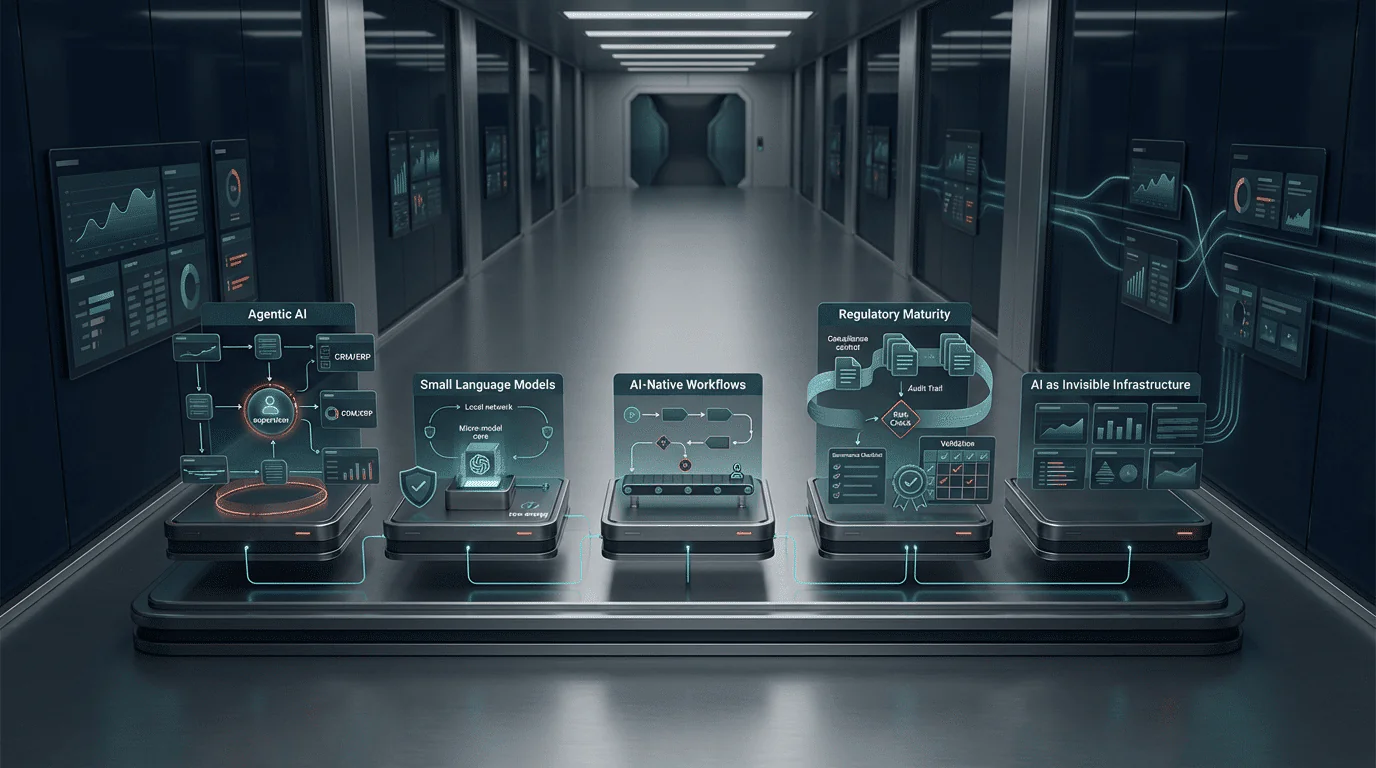

The next wave of custom AI will be defined by agentic AI systems that learn, adapt, and act autonomously within defined boundaries — shifting the value proposition from “AI that answers questions” to “AI that completes workflows end-to-end.” Five converging trends — agentic AI, small language models, AI-native workflows, regulatory maturity, and AI as invisible infrastructure — will determine which organisations extract lasting value from their AI investments and which remain stuck in perpetual pilot mode.

The Landscape Is Shifting — And the Window Is Narrowing

2026 is not the year to wait and see. It is the year in which early architectural decisions will determine which organisations successfully scale AI systems and which get stuck in perpetual pilot purgatory.

The first decade of enterprise AI (2015–2025) was defined by experimentation. Organisations built pilots, tested proofs of concept, and explored what AI could do. The results, as documented throughout this series, were sobering: RAND Corporation found that over 80% of AI projects failed, BCG reported that 74% of companies struggled to extract value, and S&P Global found that 42% of companies abandoned most of their AI initiatives in 2025. The experimentation phase is over. The next phase — 2026 to 2030 — will separate the organisations that build AI into their operational DNA from those that continue treating AI as a bolt-on technology experiment.

The five trends described below are not speculative predictions. They are developments already underway, supported by market data, regulatory timelines, and technology maturity indicators. For mid-market companies in the Benelux region, understanding these trends is not about chasing the next technology cycle. It is about making investment decisions today that will still be delivering value in 2028 and beyond.

Trend 1: Agentic AI — From Answering Questions to Completing Workflows

What Is Changing

The most significant shift in enterprise AI between 2026 and 2030 is the transition from AI systems that respond to prompts to AI systems that autonomously execute multi-step workflows. This is the shift from “assistant AI” to “agentic AI.” An assistant AI answers your question. An agentic AI understands your goal, plans the steps required to achieve it, executes those steps across multiple systems, evaluates the results, and adjusts its approach — all without requiring human intervention at each step.

Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. This is not a gradual evolution: it is at least an eightfold increase in a single year. In Gartner’s best-case scenario, agentic AI could drive approximately 30% of enterprise application software revenue by 2035, surpassing $450 billion. The AI agents market itself is projected to grow from $7.84 billion in 2025 to $52.62 billion by 2030, a compound annual growth rate of 46.3%.
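The growth figures above can be checked with a few lines of arithmetic. This is a minimal sketch; the function name `cagr` is illustrative, and the adoption multiple treats "less than 5%" as exactly 5%, so it is a lower bound.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market figures quoted above, in billions of US dollars.
market_2025 = 7.84
market_2030 = 52.62

growth = cagr(market_2025, market_2030, years=2030 - 2025)
print(f"Implied CAGR: {growth:.1%}")

# Adoption multiple: 40% of applications versus at most 5% a year earlier.
adoption_multiple = 40 / 5
print(f"Adoption multiple: at least {adoption_multiple:.0f}x")
```

The implied rate agrees with the 46.3% quoted in the market projection.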

What It Means for Custom AI

For organisations building custom AI solutions, the agentic shift changes the design paradigm fundamentally. Current custom AI systems typically handle single tasks: a demand forecasting model predicts next month’s sales, a document classifier categorises incoming mail, a chatbot answers customer questions. Agentic AI systems chain these capabilities together: an agent receives a customer complaint, analyses the order history, identifies the root cause, generates a resolution proposal, checks available inventory for a replacement, drafts the customer communication, and escalates to a human only when the case falls outside its decision boundaries.

This shift requires a new architectural approach. Instead of building isolated AI models, organisations need to build orchestrated systems where multiple specialised agents collaborate. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025, signalling that enterprises are actively designing these systems. The architecture mirrors how effective human teams operate: a researcher agent gathers information, an analyst agent validates results, an executor agent takes action, and a supervisor agent monitors quality.
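The researcher, analyst, executor, and supervisor roles described above can be sketched as a simple sequential pipeline. Everything here is hypothetical: the `Case` fields, the placeholder logic inside each agent, and the fixed ordering are assumptions for illustration, not a description of any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Case:
    """Shared state handed from one agent to the next."""
    complaint: str
    findings: list = field(default_factory=list)
    validated: bool = False
    action: Optional[str] = None
    escalated: bool = False


def researcher(case: Case) -> Case:
    # Stand-in for looking up order history in a real system.
    case.findings.append(f"order history checked for: {case.complaint}")
    return case


def analyst(case: Case) -> Case:
    # Placeholder validation rule: any finding counts as sufficient evidence.
    case.validated = len(case.findings) > 0
    return case


def executor(case: Case) -> Case:
    # Acts only on cases the analyst has validated.
    if case.validated:
        case.action = "send replacement"
    return case


def supervisor(case: Case) -> Case:
    # Escalate to a human whenever no action could be decided.
    case.escalated = case.action is None
    return case


PIPELINE = [researcher, analyst, executor, supervisor]


def run(case: Case) -> Case:
    """Pass the case through each agent in order."""
    for agent in PIPELINE:
        case = agent(case)
    return case


result = run(Case(complaint="damaged item on arrival"))
print(result.action, result.escalated)
```

In a real deployment each function would wrap a model or a system call, and the supervisor would apply explicit decision boundaries instead of a single null check.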

The Critical Nuance

Gartner simultaneously predicts that over 40% of agentic AI projects will be cancelled by the end of 2027, due to escalating costs, unclear business value, or inadequate risk controls. The lesson from Section 9 applies directly: agentic AI projects fail for the same governance reasons that any AI project fails — starting with technology instead of a business problem, underinvesting in data readiness, and scaling before validating ROI. The technology is maturing. The governance discipline required to deploy it successfully has not changed.

For Benelux mid-market companies, the practical implication is this: the custom AI systems you build today should be designed with agentic expansion in mind. A demand forecasting model built as an isolated prediction engine has a fixed value ceiling. The same model built as a component that can be orchestrated with inventory management, supplier communication, and purchasing agents has a value trajectory that compounds over time.
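One way to read "designed with agentic expansion in mind" is that each component exposes the same small interface, so an orchestrator can chain it with others later. The sketch below assumes a hypothetical `Component` protocol with dictionary inputs and outputs; the class names and the averaging "model" are placeholders.

```python
from typing import Protocol


class Component(Protocol):
    """Common interface every orchestratable component is assumed to expose."""
    name: str

    def handle(self, request: dict) -> dict: ...


class DemandForecast:
    name = "demand_forecast"

    def handle(self, request: dict) -> dict:
        # Placeholder model: the forecast is the mean of recent sales.
        history = request["sales_history"]
        return {"forecast_units": sum(history) / len(history)}


class Purchasing:
    name = "purchasing"

    def handle(self, request: dict) -> dict:
        # Order whatever the forecast requires beyond current stock.
        shortfall = request["forecast_units"] - request["stock_units"]
        return {"order_units": max(0, round(shortfall))}


# The forecast output feeds straight into the purchasing component.
forecast = DemandForecast().handle({"sales_history": [90, 100, 110]})
order = Purchasing().handle({**forecast, "stock_units": 40})
print(order)
```

The forecasting logic is unchanged whether it runs alone or inside a chain; only the shared interface makes the second use possible.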

Trend 2: Small Language Models — Efficient, Domain-Specific, Privacy-Preserving

What Is Changing

The “bigger is better” era of AI development is giving way to a more nuanced reality. While frontier models (GPT-5, Gemini 2.0, Claude) continue to grow in capability, the most significant business impact for mid-market companies is increasingly driven by Small Language Models (SLMs) — compact, efficient models designed to run on local infrastructure rather than in massive cloud data centres.

SLMs represent a fundamental shift in the cost-performance equation: serving a 7-billion-parameter SLM is reported to be 10–30× cheaper than running a 70–175-billion-parameter LLM, with published estimates of up to 75% savings on combined GPU, cloud, and energy expenses. For companies currently facing monthly cloud AI bills of €5,000–€50,000, the economic case for migrating high-volume workloads to domain-specific SLMs is increasingly compelling.
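A rough worked example shows what these ratios would mean for the monthly bills mentioned above. It assumes, for simplicity, that the whole bill is model-serving cost and that the entire workload moves to the SLM; neither assumption comes from the quoted figures, and the function names are illustrative.

```python
def cost_after_ratio(llm_monthly_cost: float, times_cheaper: float) -> float:
    """Monthly cost if serving is `times_cheaper` times cheaper than the LLM."""
    return llm_monthly_cost / times_cheaper


def cost_after_saving(llm_monthly_cost: float, saving_pct: int) -> float:
    """Monthly cost after cutting the bill by `saving_pct` percent."""
    return llm_monthly_cost * (100 - saving_pct) / 100


for bill in (5_000, 50_000):
    best = cost_after_ratio(bill, 30)
    worst = cost_after_ratio(bill, 10)
    conservative = cost_after_saving(bill, 75)
    print(
        f"EUR {bill:,}/month today: "
        f"EUR {best:,.0f} to {worst:,.0f} at 10-30x cheaper, "
        f"EUR {conservative:,.0f} at a 75% saving"
    )
```

The serving ratio and the overall saving give different results because the 75% figure covers total infrastructure spend, so a budget case is safer built on the more conservative number.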

Why This Matters for the Benelux Mid-Market

Three characteristics of SLMs align directly with Benelux mid-market requirements:

Privacy and data sovereignty. In an era of AVG/GDPR enforcement and heightened awareness of data sovereignty, the ability to deploy AI models on-premise or on-device is strategically valuable. SLMs enable organisations to process sensitive data — customer records, financial transactions, legal documents, medical information — within their own secure infrastructure. The data never leaves the organisation’s network, eliminating the transmission risks associated with external API calls to cloud-based LLMs. For sectors subject to DNB/AFM financial regulation, IGJ healthcare oversight, or legal privilege requirements, this is not a convenience — it is a compliance necessity.

Domain-specific accuracy. A fine-tuned 7B legal SLM can achieve 94% accuracy on contract analysis versus 87% for a general-purpose frontier model. This counterintuitive result, a smaller model outperforming a larger one, occurs because the SLM is trained specifically on the domain’s terminology, patterns, and requirements. For mid-market companies with specialised operational contexts (logistics, manufacturing, professional services), a domain-specific SLM trained on their actual data can outperform a general-purpose giant that knows something about everything but nothing specific about their business.

Cost efficiency at scale. SLMs can run on standard server hardware, consumer GPUs, or even edge devices — eliminating the expensive cloud infrastructure that makes large-model AI prohibitively expensive for many mid-market organisations. The hybrid approach is emerging as the standard: SLMs handle high-volume, domain-specific tasks (document processing, classification, routine predictions), while frontier LLMs are reserved for complex reasoning tasks that require broad knowledge. This hybrid architecture optimises both cost and capability.
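The hybrid approach amounts to a routing decision made per task. The sketch below is one possible rule set, not an established standard: the task labels, the tier names, and the choice to keep all sensitive data on the local model are assumptions.

```python
from dataclasses import dataclass

# Task types treated as high-volume and domain-specific, as listed above.
SLM_TASKS = {"document_processing", "classification", "routine_prediction"}


@dataclass
class Task:
    kind: str
    contains_sensitive_data: bool = False


def choose_model(task: Task) -> str:
    """Return the tier that should handle the task."""
    # Assumption: sensitive data never leaves local infrastructure.
    if task.contains_sensitive_data:
        return "local_slm"
    if task.kind in SLM_TASKS:
        return "local_slm"
    # Everything else is assumed to need broad knowledge or complex reasoning.
    return "frontier_llm"


print(choose_model(Task("classification")))
print(choose_model(Task("market_entry_analysis")))
print(choose_model(Task("market_entry_analysis", contains_sensitive_data=True)))
```

Putting the sensitivity check first means data sovereignty overrides capability, which matches the compliance argument made earlier in this section.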

The Practical Implication

Custom AI projects should evaluate SLMs as the default starting point for domain-specific applications. The methodology described in Section 5 (Data-to-Done) applies identically: the technology choice (SLM vs. LLM) is determined by the business problem, not by the technology’s marketing. For high-volume, privacy-sensitive, domain-specific applications — which describes the majority of Benelux mid-market AI use cases — SLMs increasingly represent the optimal technology choice.

Trend 3: AI-Native Workflows — Processes Designed Around AI, Not Retrofitted

What Is Changing

The first wave of enterprise AI (2018–2025) was characterised by retrofitting: taking existing business processes and inserting AI into them. A manual quality inspection process was augmented with computer vision. A spreadsheet-based demand forecasting process was enhanced with a machine learning model. The existing workflow remained intact; AI was bolted on as an improvement.

McKinsey’s 2025 AI survey found that organisations reporting significant financial returns from AI are twice as likely to have redesigned end-to-end workflows before selecting modelling techniques. This is the defining insight of the AI-native workflow trend: the highest-value AI implementations do not improve existing processes — they redesign processes from the ground up with AI capabilities as a core design assumption.

What AI-Native Workflows Look Like

An AI-retrofitted accounts payable process: invoices arrive by email, a human sorts them, enters data into the ERP, matches to purchase orders, flags discrepancies, routes for approval, processes payment. AI is added at one step — perhaps OCR to extract invoice data — but the overall workflow remains human-driven with AI assistance.

An AI-native accounts payable process: invoices arrive through any channel and are automatically classified, extracted, validated against purchase orders, matched to contracts, checked for pricing discrepancies, routed for approval only when the AI system identifies exceptions that fall outside its confidence threshold, and processed for payment. The human role shifts from processing to exception handling and oversight. The workflow is designed around what AI can do reliably, with human intervention structured around what AI cannot yet do.
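The exception-routing step in the AI-native process can be sketched as a small decision function. The threshold values, field names, and the two outcomes are hypothetical; a real process would calibrate them against its own error costs.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.90  # hypothetical value, to be tuned per process
PRICE_TOLERANCE = 0.02       # hypothetical: accept up to 2% price deviation


@dataclass
class Invoice:
    invoice_total: float
    po_total: float
    extraction_confidence: float  # model's confidence in the extracted data


def route(invoice: Invoice) -> str:
    """Return 'auto_pay' for routine invoices, 'human_review' for exceptions."""
    # Low-confidence extractions always go to a person.
    if invoice.extraction_confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    # A missing or zero purchase order total cannot be matched automatically.
    if invoice.po_total <= 0:
        return "human_review"
    deviation = abs(invoice.invoice_total - invoice.po_total) / invoice.po_total
    if deviation > PRICE_TOLERANCE:
        return "human_review"
    return "auto_pay"


print(route(Invoice(1000.0, 1000.0, 0.97)))
print(route(Invoice(1100.0, 1000.0, 0.99)))
print(route(Invoice(1000.0, 1000.0, 0.50)))
```

Human review is the default for anything the rules do not explicitly clear, which keeps the oversight role described above intact.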

The difference is not incremental improvement — it is structural transformation. AI-retrofitted processes typically deliver 10–25% efficiency gains. AI-native processes, designed from scratch around AI capabilities, can deliver 50–80% efficiency gains because they eliminate entire process steps rather than accelerating individual steps.

The Mid-Market Advantage

Counterintuitively, mid-market companies have a structural advantage in AI-native workflow design. Large enterprises have decades of embedded processes, complex organisational politics around process change, and legacy systems that resist redesign. A mid-market company with 50–500 employees can redesign a core business process in weeks rather than months, with fewer stakeholders to align and less legacy infrastructure to accommodate. The crawl-walk-run approach from Section 5 enables this: start by automating a single workflow end-to-end (Tier 1), then connect automated workflows across departments (Tier 2), then embed AI into the organisation’s operational architecture (Tier 3).

Trend 4: Regulatory Maturity — The EU AI Act Moves from Theory to Enforcement

What Is Changing

The most critical compliance deadline for most enterprises is 2 August 2026, when requirements for high-risk AI systems under the EU AI Act become enforceable. This includes AI used in employment decisions, credit scoring, education, law enforcement, and critical infrastructure. The EU AI Act — Regulation (EU) 2024/1689 — is the world’s first comprehensive legal framework for AI, and it establishes obligations based on a risk-based classification system that directly affects how custom AI systems must be designed, documented, and maintained.

The Timeline That Matters

The EU AI Act entered into force in August 2024, with a phased implementation timeline:

- February 2025: Prohibited AI practices became enforceable — including social scoring, exploitative subliminal techniques, and untargeted facial recognition scraping.

- August 2025: General-purpose AI model obligations took effect, along with the establishment of the EU AI Office and national competent authorities.

- August 2026: The comprehensive compliance framework for high-risk AI systems becomes enforceable. This is the deadline that affects most enterprise custom AI deployments.

- August 2027: The remaining provisions become fully applicable, including requirements for AI systems embedded in regulated products.

The European Commission proposed a “Digital Omnibus” package in late 2025 that could postpone high-risk obligations to December 2027, but prudent compliance planning treats August 2026 as the binding deadline. Organisations that wait for potential delays risk non-compliance if the extension does not materialise. The Commission already missed its February 2026 deadline for providing guidance on Article 6 high-risk classification, creating uncertainty — but uncertainty about guidance does not change the legal obligation to comply.
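For planning purposes, the phased timeline above can be encoded as a simple lookup. The text states 2 August 2026 explicitly; the day of the month for the other milestones follows the application dates in Article 113 of the Regulation. The labels are shortened paraphrases, the function name is illustrative, and the sketch deliberately ignores any postponement under the proposed Digital Omnibus.

```python
from datetime import date

# (application date, obligations that start applying on that date)
MILESTONES = [
    (date(2025, 2, 2), "prohibited AI practices"),
    (date(2025, 8, 2), "general-purpose AI model obligations"),
    (date(2026, 8, 2), "high-risk AI system requirements"),
    (date(2027, 8, 2), "remaining provisions (AI in regulated products)"),
]


def obligations_in_force(on: date) -> list:
    """List the obligation groups that already apply on the given date."""
    return [label for start, label in MILESTONES if on >= start]


go_live = date(2026, 9, 1)  # hypothetical project go-live date
for label in obligations_in_force(go_live):
    print(label)
```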

What This Means for Custom AI Projects

The EU AI Act creates four categories of practical impact on custom AI development:

Risk classification as a design input. Every custom AI project must now begin with a risk classification assessment. Is the system high-risk under Annex III (employment, credit, education, critical infrastructure)? If yes, the design must incorporate risk management systems, data governance measures, technical documentation, human oversight mechanisms, and post-market monitoring — all from the initial design phase, not added retrospectively. Section 5’s Data-to-Done methodology already incorporates this as a Phase 1 deliverable.

Documentation as a deliverable. High-risk AI systems require comprehensive technical documentation covering the system’s intended purpose, design choices, training data characteristics, performance metrics, limitations, and risk mitigation measures. This documentation must be maintained throughout the system’s lifecycle. For custom AI projects, this means documentation is not optional — it is a regulatory requirement. The cost of documentation should be included in every project budget (see Section 7’s Total Cost of Ownership framework).

Transparency and human oversight. Deployers of high-risk AI systems must ensure that users understand the system’s capabilities and limitations, and that human oversight mechanisms are in place to intervene when the system produces unexpected or harmful results. For AI-native workflows (Trend 3), this means designing explicit escalation paths and human-in-the-loop checkpoints at decision points with material consequences.

Conformity assessment. Before placing a high-risk AI system on the EU market, providers must complete conformity assessments, finalise technical documentation, affix CE marking, and register in the EU database. Custom AI partners must be familiar with these requirements and build them into the project lifecycle.
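The first two impacts above, risk classification and documentation, lend themselves to simple project checks. The sketch below is a simplified planning aid, not a legal test: the area labels cover only the Annex III examples named in this section (the Regulation lists more), and all class, field, and function names are hypothetical.

```python
from dataclasses import dataclass, fields

# Only the Annex III areas named in the text above; the Regulation lists more.
HIGH_RISK_AREAS = {"employment", "credit", "education", "critical_infrastructure"}

HIGH_RISK_DELIVERABLES = [
    "risk management system",
    "data governance measures",
    "technical documentation",
    "human oversight mechanisms",
    "post-market monitoring",
]


@dataclass
class TechnicalDocumentation:
    """Sections named in the text; an empty string means not yet written."""
    intended_purpose: str = ""
    design_choices: str = ""
    training_data_characteristics: str = ""
    performance_metrics: str = ""
    limitations: str = ""
    risk_mitigation_measures: str = ""


def required_deliverables(use_area: str) -> list:
    """Deliverables to plan in the design phase for the given use area."""
    return list(HIGH_RISK_DELIVERABLES) if use_area in HIGH_RISK_AREAS else []


def missing_sections(doc: TechnicalDocumentation) -> list:
    """Names of documentation sections that are still empty."""
    return [f.name for f in fields(doc) if not getattr(doc, f.name).strip()]


doc = TechnicalDocumentation(intended_purpose="Rank job applicants for interview")
print(required_deliverables("employment"))
print(missing_sections(doc))
```

Run at each phase gate, the second function turns "documentation as a deliverable" into a list of what is still missing.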

The Benelux Dimension

Companies operating in the Netherlands and Belgium sit at the intersection of multiple regulatory frameworks: the EU AI Act, AVG/GDPR, sector-specific regulations (DNB/AFM for financial services, IGJ for healthcare), and national implementation legislation. As discussed in Section 8, a custom AI partner must demonstrate familiarity with these overlapping frameworks and how they affect AI system design. The regulatory trend between 2026 and 2030 is toward more specific guidance, more active enforcement, and higher penalties for non-compliance. Building compliance into AI system design from day one is not gold-plating — it is risk management.

Trend 5: AI as Infrastructure — Embedded, Invisible, Continuous

What Is Changing

The final trend is perhaps the most transformative: AI is transitioning from a visible technology that organisations “adopt” to invisible infrastructure that is embedded into every business system and process. Just as companies no longer “adopt” electricity or the internet — these are simply the infrastructure on which business operates — AI is moving toward the same status. By 2030, the question will not be “do you use AI?” but “how deeply is AI embedded in your operations?”

Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI, enabling 15% of day-to-day work decisions to be made autonomously. By 2030, 50% of cross-functional supply chain management solutions will use intelligent agents to autonomously execute decisions. These are not predictions about standalone AI projects — they are predictions about AI becoming a native component of the software and systems that businesses already use.

What AI-as-Infrastructure Looks Like

In 2025, AI-as-infrastructure means: your ERP system includes demand forecasting, your CRM includes customer intent prediction, your email platform includes intelligent routing, your security system includes anomaly detection. You do not think of these as “AI projects” — they are capabilities embedded in the tools you use daily.

By 2028–2030, AI-as-infrastructure will extend further: your procurement system autonomously negotiates with suppliers within defined parameters, your quality management system adjusts production settings in real-time based on incoming material variation, your financial planning system continuously recalibrates forecasts based on market signals, and your customer service system resolves 70–80% of inquiries without human intervention. The AI is invisible — the business outcome is not.

The Strategic Implication for Custom AI

The AI-as-infrastructure trend creates a strategic calculus for custom AI investment. Off-the-shelf AI capabilities — the ones embedded in commercial software — will handle generic use cases competently. They will provide adequate demand forecasting, acceptable customer service automation, and standard document processing. These capabilities will become table stakes: every competitor will have them, because they come built into the software platforms everyone uses.

Competitive differentiation will come from custom AI that addresses the specific operational challenges unique to your business, your industry, and your market position. A logistics company’s competitive advantage will not come from generic route optimisation (available in every fleet management platform) but from custom AI that understands their specific customer delivery preferences, vehicle fleet characteristics, and seasonal demand patterns. A manufacturing company’s advantage will not come from standard predictive maintenance (embedded in modern SCADA systems) but from custom AI that optimises their specific production process with their specific equipment and their specific quality requirements.

This is the strategic argument for custom AI as a long-term investment: as generic AI capabilities become commoditised through AI-as-infrastructure, the value of AI that is trained on your proprietary data, optimised for your specific workflows, and designed for your competitive context increases — precisely because it cannot be replicated by competitors who buy the same commercial software you use.

Five Trends at a Glance: The 2026–2030 Landscape

| Trend | 2026 State | 2030 Projection | Mid-Market Impact |
| --- | --- | --- | --- |
| Agentic AI | 40% of enterprise apps embed AI agents (Gartner forecast) | $52.6B AI agents market, multi-agent ecosystems | Design custom AI with orchestration in mind |
| Small Language Models | 10–30× cheaper to serve than LLMs | Default for domain-specific tasks | On-premise, privacy-preserving, cost-effective |
| AI-Native Workflows | Organisations with significant AI returns are 2× as likely to have redesigned workflows end-to-end | Standard design methodology | 50–80% efficiency gains vs. 10–25% from retrofit |
| EU AI Act Enforcement | High-risk obligations enforceable 2 August 2026 | Full enforcement, active penalties | Compliance built into design, not added later |
| AI as Infrastructure | 33% of enterprise apps forecast to include agentic AI by 2028 | AI embedded, invisible, continuous | Custom AI for competitive differentiation |

What These Trends Mean for Your Next AI Investment

The five trends converge on a single strategic conclusion: the organisations that invest in custom AI with disciplined methodology today will be structurally advantaged by 2028–2030. The organisations that wait will face higher costs, greater competitive disadvantage, and a shrinking pool of available AI talent and partnership capacity.

Here is how to translate these trends into investment decisions:

- Build for orchestration, not isolation. Every custom AI component should be designed as a potential building block in a larger agentic system. A standalone demand forecasting model is valuable. A demand forecasting model that can communicate with inventory management, supplier ordering, and financial planning agents is transformative.

- Evaluate SLMs before defaulting to large models. For domain-specific, privacy-sensitive, high-volume applications — which describes most mid-market use cases — SLMs offer better cost-performance ratios and stronger data sovereignty. Start with the smallest model that solves the problem.

- Design AI-native workflows, not AI-augmented processes. The highest-ROI AI investments redesign workflows from the ground up rather than inserting AI into existing processes. Section 5’s Data-to-Done methodology supports this by requiring workflow analysis before technology selection.

- Build compliance into design from day one. The EU AI Act enforcement timeline is not a future concern — it is a current design requirement. Risk classification, documentation, transparency, and human oversight should be project deliverables, not afterthoughts. The cost of building compliance in is 10–20% of project budget; the cost of retrofitting compliance is 50–100%.

- Invest in proprietary AI where generic AI commoditises. As AI-as-infrastructure makes generic capabilities table stakes, competitive differentiation shifts to custom AI trained on your unique data and optimised for your specific operational context. This is the investment that compounds over time.
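The compliance cost comparison in the list above can be made concrete for a given budget. The project budget below is a hypothetical figure chosen for illustration; the percentage ranges are the ones quoted in the list, and the function name is illustrative.

```python
def cost_range(project_budget: int, low_pct: int, high_pct: int) -> tuple:
    """Return the (low, high) cost for a percentage range of the budget."""
    return project_budget * low_pct / 100, project_budget * high_pct / 100


budget = 200_000  # hypothetical project budget in euros

built_in = cost_range(budget, 10, 20)    # compliance designed in from day one
retrofit = cost_range(budget, 50, 100)   # compliance added after the fact

print(f"Built in:    EUR {built_in[0]:,.0f} to {built_in[1]:,.0f}")
print(f"Retrofitted: EUR {retrofit[0]:,.0f} to {retrofit[1]:,.0f}")
```

On these figures, even the cheapest retrofit costs two and a half times the most expensive built-in case.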

The Data-to-Done framework described in Section 5 of this series was designed to accommodate these trends. Its phase-gated approach, mandatory data assessment, pilot validation, and scaling readiness gates apply regardless of whether the underlying technology is a traditional ML model, a fine-tuned SLM, or an orchestrated multi-agent system. The methodology is technology-agnostic because the governance disciplines that determine success or failure do not change with the technology — they apply to every AI paradigm.

Frequently Asked Questions

What is agentic AI?

Agentic AI refers to AI systems that can autonomously plan, execute, and adapt multi-step workflows to achieve defined goals, rather than simply responding to individual prompts. Unlike traditional AI assistants that answer questions, agentic AI systems understand objectives, determine the steps required, execute across multiple systems, evaluate results, and adjust their approach — all within predefined boundaries and with human oversight for high-stakes decisions. Gartner predicts 40% of enterprise applications will embed AI agents by end of 2026.

How will the EU AI Act affect my business?

If your AI systems make or significantly influence decisions about employment, creditworthiness, education, or access to essential services, they likely fall under the high-risk classification and must comply with requirements for risk management, data governance, technical documentation, transparency, and human oversight by 2 August 2026. Even if your systems are not high-risk, general transparency obligations apply to all AI systems that interact with people. The practical impact: every new custom AI project should include risk classification and compliance planning from Phase 1.

What are small language models and why do they matter?

Small language models (SLMs) are compact AI models with 1–14 billion parameters, designed for domain-specific tasks rather than general-purpose capabilities. They matter because they offer 10–30× lower operating costs than large language models, can run on-premise for data sovereignty compliance, and often achieve higher accuracy on specialised tasks through domain-specific fine-tuning. For mid-market companies with privacy-sensitive data and defined use cases, SLMs frequently represent the optimal technology choice.

Should I wait for AI technology to mature before investing?

No. The governance disciplines that determine AI success — business problem definition, data readiness, change management, IP ownership — do not change as technology matures. Investing in these disciplines now builds the organisational AI maturity that enables you to adopt more advanced capabilities (agentic AI, SLMs, AI-native workflows) faster and more effectively as they mature. Companies that wait are not avoiding risk — they are accumulating competitive disadvantage while their more disciplined competitors build AI maturity.

How do I prepare my organisation for agentic AI?

Start with today’s proven technology, a well-defined custom AI solution solving a specific business problem, but design it with future orchestration in mind. Ensure your data pipelines are robust, your APIs are well-documented, and your AI components are modular. When agentic AI frameworks mature, organisations with clean data, documented systems, and operational AI experience will be able to deploy agents in weeks, while those starting from scratch will need months.

What AI investments will still be valuable in 2030?

Three categories of AI investment have durable value: proprietary data assets (clean, structured, well-governed data pipelines built on your operational data), organisational AI maturity (teams experienced in deploying, maintaining, and iterating on AI systems), and custom AI models trained on domain-specific data that competitors cannot replicate. Technology choices may evolve, but these foundational investments compound in value regardless of which specific models or frameworks emerge.

Key Takeaways

- The AI agents market is projected to grow from $7.84B in 2025 to $52.62B by 2030. The shift from prompt-response to autonomous workflow execution is the defining enterprise AI trend.

- Small language models offer 10–30× cost reduction over LLMs for domain-specific tasks, with on-premise deployment for data sovereignty compliance.

- AI-native workflows (designed around AI) deliver 50–80% efficiency gains vs. 10–25% from AI-retrofitted processes.

- EU AI Act high-risk obligations become enforceable 2 August 2026 — compliance must be built into AI system design from day one.

- As generic AI becomes infrastructure, competitive differentiation shifts to custom AI trained on proprietary data and optimised for specific operational contexts.

- The Data-to-Done methodology (Section 5) is technology-agnostic: the governance disciplines that determine success apply to every AI paradigm, from traditional ML to agentic systems.

Sources

1. Gartner — 40% of Enterprise Apps Will Feature AI Agents by 2026. gartner.com

2. Gartner — Over 40% of Agentic AI Projects Will Be Cancelled by End of 2027. gartner.com

3. Gartner — Guardian Agents Will Capture 10–15% of Agentic AI Market by 2030. gartner.com

4. MarketsandMarkets — AI Agents Market Size: $7.84B to $52.62B (2025–2030). marketsandmarkets.com

5. MachineLearningMastery — 7 Agentic AI Trends to Watch in 2026. machinelearningmastery.com

6. Salesmate — AI Agent Trends for 2026: 7 Shifts to Watch. salesmate.io

7. OneReach — Agentic AI Stats 2026: Adoption Rates, ROI, and Market Trends. onereach.ai

8. Iterathon — Small Language Models 2026: Cut AI Costs 75%. iterathon.tech

9. TechBullion — The Small Language Model Revolution: AI on the Edge, Feb 2026. techbullion.com

10. SecurePrivacy — EU AI Act 2026 Compliance Guide. secureprivacy.ai

11. LegalNodes — EU AI Act 2026 Updates: Compliance Requirements and Business Risks. legalnodes.com

12. IAPP — European Commission Misses Deadline for AI Act Guidance on High-Risk Systems. iapp.org

13. RAND Corporation — Root Causes of Failure for AI Projects, 2024. rand.org

14. BCG — AI Adoption 2024: 74% Struggle. bcg.com

15. WorkOS / S&P Global — Why Most Enterprise AI Projects Fail, July 2025. workos.com