The right AI partner demonstrates domain expertise in your industry, has a proven methodology from pilot to production, provides transparent pricing, leaves IP ownership with you, and can show verifiable case studies with measurable outcomes, not just impressive demos. With vendor-led implementations succeeding at twice the rate of internal builds, partner selection is the single highest-leverage decision in your AI investment. This article gives you a weighted evaluation framework, the red flags that predict failure, and the exact questions to ask before signing.

Why Partner Selection Is the Highest-Leverage Decision

The difference between AI success and failure is not the algorithm — it is the implementation partner. Choosing the right partner is more impactful than choosing the right technology, the right use case, or even the right budget.

MIT’s Project NANDA found that vendor-led AI implementations succeed at a 67% rate compared to just 33% for internal builds. This 2:1 success differential is not about the vendor having better technology; it is about having better methodology. Experienced AI partners have encountered and solved the data quality problems, integration challenges, and change management resistance that derail first-time projects. They have built the project governance structures that prevent scope creep, the validation frameworks that catch model drift, and the deployment patterns that move AI from pilot to production without the 18-month delays that often characterise enterprise attempts.

Yet despite this evidence, most companies spend more time evaluating CRM software (€500/month) than they spend evaluating AI partners (€50,000–€200,000 investment). The evaluation often consists of comparing proposal prices and watching demo presentations — neither of which predicts implementation success. 74% of companies struggle to extract value from AI investments, and a significant share of that failure originates at the partner selection stage, not at the technology selection stage.

This article provides a structured, weighted evaluation framework that replaces subjective impression with data-driven assessment. Use it to evaluate any AI partner — including us. The criteria are universal, the questions are specific, and the red flags are drawn from patterns observed across hundreds of failed AI engagements documented in the industry research.

The Eight Evaluation Criteria: A Weighted Scoring Framework

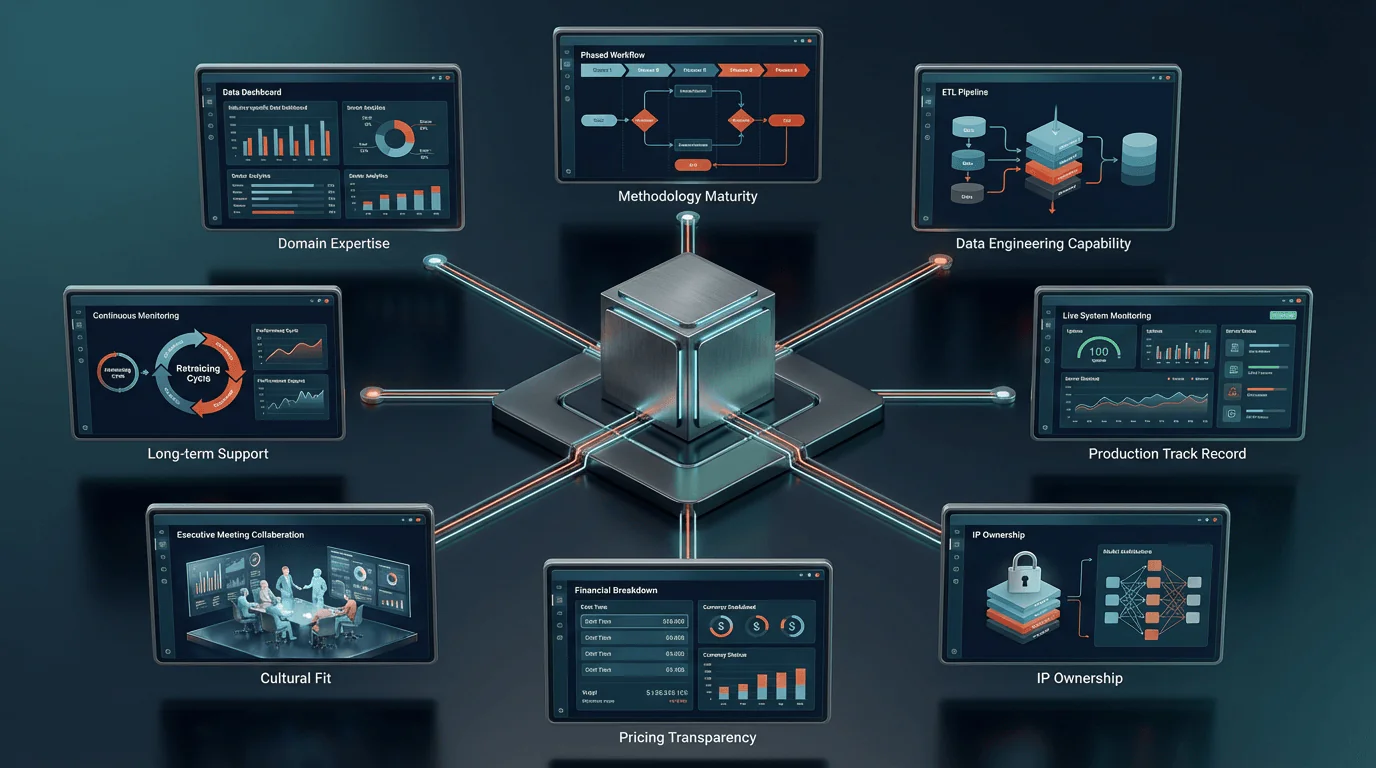

Not all evaluation criteria carry equal weight. Domain expertise and methodology maturity together account for 45% of the score because they are the strongest predictors of implementation success. Pricing transparency and IP ownership, while commercially important, are qualifying factors rather than differentiators.

| # | Criterion | Weight | What It Predicts |
|---|---|---|---|
| 1 | Domain Expertise | 25% | Whether the partner understands your industry’s data, regulations, and operational reality |
| 2 | Methodology Maturity | 20% | Whether the partner has a repeatable, phase-gated process from discovery to production |
| 3 | Data Engineering Capability | 15% | Whether the partner can handle messy, real-world data, not just clean datasets |
| 4 | Production Deployment Track Record | 10% | Whether the partner’s AI systems actually run in production, not just in pilot |
| 5 | IP Ownership & Data Rights | 10% | Whether you retain ownership of the model, data pipelines, and trained weights |
| 6 | Pricing Transparency | 8% | Whether all costs are disclosed upfront, including maintenance, infrastructure, and retraining |
| 7 | Cultural Fit & Communication | 7% | Whether the partner communicates in business outcomes, not just technical jargon |
| 8 | Long-Term Support Model | 5% | Whether the partner offers structured post-deployment support, not just handover |
| | Total | 100% | |

Criterion 1: Domain Expertise (25%)

Domain expertise is the single most important differentiator because it determines whether the partner can translate your business problem into a technical solution that actually works in your operational context. A partner with generic AI skills but no experience in your sector will spend weeks understanding your data structures, industry terminology, regulatory requirements, and operational constraints — all of which an experienced partner already knows.

Domain expertise manifests in three verifiable ways: the partner can describe the typical data architecture in your sector without being told, the partner understands sector-specific regulations and how they constrain AI design (e.g., EU AI Act high-risk classifications for financial services and healthcare), and the partner can reference specific metrics that matter in your industry (not generic KPIs, but sector-specific outcomes like forecast accuracy for logistics, OEE for manufacturing, or claim processing time for insurance).

How to verify: Ask the partner to describe the three most common data challenges in your industry. An experienced partner will name them immediately. Ask for two case studies in your sector with quantified outcomes. Generic “we helped a company improve efficiency” is insufficient — demand specific metrics, timelines, and the business problem that was solved.

Criterion 2: Methodology Maturity (20%)

A mature methodology is the structural difference between a project that delivers on time and on budget, and a project that drifts into endless iteration. The methodology should be documented, repeatable, and phase-gated — meaning each phase has defined deliverables, success criteria, and a decision gate before proceeding to the next phase.

As discussed in Section 5 of this series, a comprehensive AI implementation methodology includes distinct phases for problem definition, data assessment, model development, integration, validation, deployment, and post-deployment optimisation. Each phase should have a documented timeline, defined deliverables, and clear go/no-go criteria. The absence of decision gates is the primary structural cause of scope creep and budget overruns in AI projects.
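A decision gate is essentially a checklist that must be fully satisfied before the next phase starts. The sketch below is minimal and assumes each go/no-go criterion can be recorded as a simple pass/fail. The `Phase` class and `first_failed_gate` function are hypothetical names chosen for illustration. In practice a gate also involves a human sign-off, not only a mechanical check.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Phase:
    """One phase of a phase-gated project (hypothetical structure for illustration)."""

    name: str
    deliverables: list[str]
    # Go/no-go criteria for this phase's decision gate: description -> met?
    exit_criteria: dict[str, bool] = field(default_factory=dict)

    def gate(self) -> tuple[bool, list[str]]:
        """Return (go, unmet criteria). A phase with no criteria never passes."""
        unmet = [name for name, met in self.exit_criteria.items() if not met]
        return bool(self.exit_criteria) and not unmet, unmet


def first_failed_gate(phases: list[Phase]) -> str | None:
    """Name of the first phase whose gate fails, or None if every gate passes."""
    for phase in phases:
        go, _ = phase.gate()
        if not go:
            return phase.name
    return None


if __name__ == "__main__":
    plan = [
        Phase("Problem definition", ["success metric"], {"metric signed off by sponsor": True}),
        Phase("Data assessment", ["data audit report"], {"data quality above threshold": False}),
        Phase("Model development", ["baseline model"], {"beats baseline on hold-out data": False}),
    ]
    print(first_failed_gate(plan))  # Data assessment
```

The design choice that matters is the empty-criteria rule. A phase with no documented exit criteria is treated as failing its gate, which mirrors the point that a missing decision gate is itself the risk.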

Vendor selection frameworks commonly recommend weighting implementation track record at around 20% of the total evaluation. This framework assigns that weight to methodology maturity, because a repeatable process is what produces a reliable track record. This is not about process documentation for its own sake. It is about whether the partner has a system that prevents the predictable failure modes: scope expansion without budget adjustment, model development on insufficiently prepared data, deployment without adequate testing, and handover without structured support.

How to verify: Request the partner’s methodology documentation. A mature partner will provide a phase-by-phase overview with deliverables and timelines without hesitation. Ask what happens if the Phase 2 data audit reveals that data quality is insufficient. The answer should involve a structured remediation plan with cost and timeline impact — not “we’ll figure it out.”

Criterion 3: Data Engineering Capability (15%)

The most undervalued capability in AI partner evaluation is data engineering. 70% of AI project failures originate from data readiness issues, yet most evaluation processes focus almost exclusively on model development (the algorithm) rather than data engineering (the pipeline that feeds the algorithm). A partner with excellent model development skills but weak data engineering capability will build an impressive model that fails in production because the data pipeline is fragile, slow, or inaccurate.

Data engineering capability includes four components:

- ETL pipeline design and automation: extracting data from multiple source systems, transforming it into a model-ready format, and loading it into the training and inference environments.

- Data quality monitoring: automated detection of missing values, format changes, and distribution shifts.

- Feature engineering: transforming raw data into the derived variables that models actually learn from.

- Data versioning: tracking which data was used to train which model version, which is essential for debugging and compliance.
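The sketch below is a minimal version of the automated data quality monitoring described above. It assumes each incoming batch arrives as a list of Python dicts, and that per-column training means and standard deviations were saved at training time. The function name, the 5% missing-value threshold, and the 3-standard-deviation shift rule are all illustrative assumptions. Comparing batch means is only a crude proxy for distribution shift; a production pipeline would more likely use a proper statistical test.

```python
from statistics import mean


def check_batch(
    rows: list[dict],
    expected_columns: set[str],
    training_stats: dict[str, tuple[float, float]],
    max_missing_rate: float = 0.05,
    max_shift_in_std: float = 3.0,
) -> list[str]:
    """Return human-readable data quality issues for one incoming batch.

    training_stats maps a numeric column to its (mean, standard deviation)
    on the training data and drives a deliberately crude shift check.
    """
    if not rows:
        return ["empty batch"]
    issues: list[str] = []

    # Format changes: columns that disappeared from, or appeared in, the source export.
    seen = set().union(*(row.keys() for row in rows))
    issues += [f"missing column: {c}" for c in sorted(expected_columns - seen)]
    issues += [f"unexpected column: {c}" for c in sorted(seen - expected_columns)]

    # Missing values: share of rows in which an expected column is absent or None.
    for col in sorted(expected_columns & seen):
        rate = sum(row.get(col) is None for row in rows) / len(rows)
        if rate > max_missing_rate:
            issues.append(f"{col}: {rate:.0%} missing")

    # Distribution shift: batch mean compared with the training mean, in training std units.
    for col, (train_mean, train_std) in training_stats.items():
        values = [row[col] for row in rows if row.get(col) is not None]
        if values and train_std > 0:
            shift = abs(mean(values) - train_mean) / train_std
            if shift > max_shift_in_std:
                issues.append(f"{col}: mean shifted by {shift:.1f} std")
    return issues
```

A non-empty result would typically block the batch from reaching training or inference until someone reviews it.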

How to verify: Ask the partner to describe their approach to a scenario where your ERP exports data in three different formats across two legacy systems. A strong partner will describe their ETL approach, data quality gates, and how they handle schema changes. A weak partner will focus on the model and treat data preparation as a minor preliminary step.
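To make the ERP scenario concrete, the sketch below shows two checks a strong answer might describe. The first maps each source system’s columns onto one canonical schema; the second normalises dates that arrive in different formats. The system names, column names, and the three date formats are all hypothetical assumptions. Any unknown format or schema change raises an error instead of being guessed.

```python
from datetime import date, datetime

# Hypothetical column names used by two legacy ERP systems, mapped onto one canonical schema.
COLUMN_MAPS = {
    "erp_a": {"OrderDate": "order_date", "Qty": "quantity"},
    "erp_b": {"ORD_DT": "order_date", "QUANTITY": "quantity"},
}

# Hypothetical date formats observed across the exports; order matters only if formats overlap.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d-%m-%Y", "%Y%m%d")


def normalise_date(raw: str) -> date:
    """Parse a date in any known export format; fail loudly instead of guessing."""
    value = raw.strip()
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date format: {raw!r}")


def to_canonical(record: dict, source: str) -> dict:
    """Rename columns to the canonical schema and normalise types.

    A column that appears or disappears in the source export is treated as a
    schema change and stops the load rather than silently producing bad rows.
    """
    mapping = COLUMN_MAPS[source]
    if set(record) != set(mapping):
        raise ValueError(
            f"schema change in {source}: expected {sorted(mapping)}, got {sorted(record)}"
        )
    row = {mapping[col]: value for col, value in record.items()}
    row["order_date"] = normalise_date(row["order_date"])
    row["quantity"] = int(row["quantity"])
    return row
```

For example, `to_canonical({"ORD_DT": "20240315", "QUANTITY": "7"}, "erp_b")` returns `{"order_date": date(2024, 3, 15), "quantity": 7}`.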

Criterion 4: Production Deployment Track Record (10%)

88% of AI pilots never reach production. This statistic means that the majority of AI vendors have experience building pilots but not deploying production systems. The gap between a working pilot and a production system is enormous: production requires monitoring infrastructure, error handling, performance optimisation under real-world load, security hardening, user access management, and integration with live business workflows. A partner whose portfolio consists primarily of proof-of-concept projects may lack the operational engineering skills to bridge this gap.

How to verify: Ask how many of their completed projects are currently running in production. Ask for the longest-running production system and its current performance metrics. Ask what their monitoring and alerting infrastructure looks like for deployed models. A partner with genuine production experience will describe drift detection, automated retraining triggers, and SLA monitoring. A partner with only pilot experience will describe accuracy metrics from the training phase.
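The Population Stability Index (PSI) is one common way to implement the drift detection mentioned above. It compares how a feature’s values are distributed in the training data with how they are distributed in live traffic. The sketch below is minimal and assumes the bin edges are fixed from the training data. The 0.25 retraining threshold is a frequently quoted rule of thumb, not a standard; in a real contract it would be whatever the SLA specifies.

```python
import math
from bisect import bisect_right


def bin_shares(values: list[float], edges: list[float], floor: float = 1e-4) -> list[float]:
    """Share of values in each bin; `edges` are the sorted inner bin boundaries."""
    if not values:
        raise ValueError("no values to bin")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Flooring empty bins avoids log(0) and division by zero in the PSI formula.
    return [max(c / len(values), floor) for c in counts]


def psi(training: list[float], production: list[float], edges: list[float]) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    expected = bin_shares(training, edges)
    actual = bin_shares(production, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def needs_retraining(score: float, threshold: float = 0.25) -> bool:
    """Flag the model for a retraining review once drift exceeds the agreed threshold."""
    return score > threshold


if __name__ == "__main__":
    edges = [2.5, 4.5, 6.5]  # fixed from the training data
    training = [1, 2, 3, 4, 5, 6, 7, 8]
    production = [6, 7, 7, 8, 8, 8, 7, 6]
    score = psi(training, production, edges)
    print(round(score, 2), needs_retraining(score))
```

A partner with genuine production experience would run a check like this on a schedule for each important input feature. When the threshold is crossed, it would alert a person rather than silently retrain.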

Criterion 5: IP Ownership and Data Rights (10%)

This is the criterion most likely to create long-term strategic damage if handled poorly. Stanford Law School research based on TermScout data found that 92% of AI vendor contracts claim broad data usage rights, far exceeding the market average of 63%. This means the majority of AI vendors, by default, claim rights to use your data for purposes beyond your project — potentially including training models for your competitors.

The IP ownership discussion must cover four distinct assets:

- Your input data: should remain 100% yours, with no vendor training rights.

- The data pipeline (the ETL logic, feature engineering code, and automation): should transfer to you.

- The trained model (the model weights resulting from training on your data): should be yours.

- The underlying framework (the partner’s pre-existing code libraries and tools): typically licensed rather than transferred, which is reasonable.

A partner who conflates these four categories or who resists specifying ownership for each is a significant risk.

How to verify: Request the partner’s standard IP clause before the proposal stage. If the contract grants the partner rights to use your data for “service improvement,” “product development,” or “model training,” negotiate explicitly for a “no training, no commingling, no retention” clause. A reputable vendor should agree to most core IP provisions — refusal to do so is itself important information about how they view the partnership.

Criterion 6: Pricing Transparency (8%)

As detailed in Section 7 of this series, the hidden costs in AI projects — data remediation, infrastructure, scope expansion, model drift, and maintenance — can exceed the visible development cost. Pricing transparency means the proposal includes all cost components: development, data preparation, infrastructure, integration, change management, testing, and post-deployment maintenance. It also means the pricing model is clearly structured — whether fixed-price, time-and-materials, or milestone-based — with defined scope boundaries for each.

How to verify: Apply the Cost Transparency Checklist from Section 7: does the proposal include total project cost, data preparation estimates, infrastructure costs, integration costs, change management budget, maintenance costs, retraining frequency and cost, and a clear IP ownership clause? Any missing item should be requested before evaluation. The partner’s willingness to provide full transparency is itself an evaluation data point.

Criterion 7: Cultural Fit and Communication (7%)

AI projects require intensive collaboration between the partner’s technical team and the client’s business stakeholders. If the partner communicates primarily in technical jargon (precision, recall, F1 scores, gradient descent) without translating these concepts into business impact (what does 93% accuracy mean for our operations?), the collaboration will break down and stakeholder confidence will erode.

Cultural and strategic fit may be weighted at only 5–7% in formal evaluation frameworks, but misaligned vendor relationships cause a disproportionate share of enterprise contract terminations. The partner should demonstrate the ability to present technical work to non-technical stakeholders, respond to business questions with business answers, and adapt communication frequency and format to the client’s preferences.

How to verify: In the evaluation meeting, ask the partner to explain a recent project’s outcome to a non-technical audience. If they default to technical metrics without connecting them to business results, the communication gap will persist throughout the project. Also assess responsiveness during the sales process — if they are slow to respond before they have your money, they will not improve after.

Criterion 8: Long-Term Support Model (5%)

AI systems require ongoing maintenance, monitoring, and optimisation (Section 7: the 100/25/25 rule). The partner’s support model determines whether your AI system improves over time or degrades. A partner whose engagement model is “build and handover” leaves you with a system that will degrade within 12–18 months as data patterns shift and model accuracy declines.

A strong support model includes defined SLAs for response time and issue resolution, scheduled model performance reviews (quarterly or semi-annual), proactive drift detection and retraining recommendations, a clear escalation path for performance degradation, and transparent maintenance pricing agreed before the project starts. The support model should be discussed and agreed during the evaluation phase, not as an afterthought after deployment.

How to verify: Ask what the partner’s standard post-deployment support package includes, how long it lasts, and what it costs. Ask what happens when model accuracy drops below the agreed threshold. A strong partner will describe their monitoring infrastructure, retraining process, and SLA terms. A weak partner will describe “ad hoc support as needed.”

The Nine Red Flags That Predict Failure

These warning signs, observed during the evaluation and proposal phase, correlate strongly with project failure. Any single red flag warrants caution. Three or more warrant disqualification.

1. Demo-Driven Sales Without Business Case Discussion

If the partner’s evaluation meeting centres on demonstrating their technology (impressive dashboards, real-time visualisations, model accuracy charts) without first understanding your business problem, revenue impact, and success criteria, they are selling technology rather than solving problems. The most impressive demo in the world is worthless if it solves the wrong problem.

2. Vague or Missing Methodology Documentation

A partner who cannot provide a written methodology with defined phases, deliverables, and decision gates either has no methodology (high risk) or has a methodology they are unwilling to share (trust concern). In either case, the project will lack the structural governance that prevents scope creep and budget overruns.

3. Resistance to IP Ownership Discussion

If the partner deflects or delays the IP ownership conversation (“we’ll sort that out in the contract phase”), they likely have standard terms that favour the vendor. 92% of AI vendor contracts claim broad data usage rights — the IP discussion should happen before the proposal, not after.

4. No Production References

A partner whose references are all pilot or proof-of-concept projects has not demonstrated the ability to deploy and maintain AI in a live business environment. Pilots are controlled experiments; production is messy reality.

5. Single-Technology Approach

A partner who recommends the same technology stack for every client and every problem is fitting your problem to their solution rather than designing a solution for your problem. The right technology depends on your data, your integration requirements, and your compliance context.

6. Unrealistic Timeline Promises

If a partner promises a fully deployed, production-ready AI system in four weeks for a multi-system integration project, the timeline is not ambitious — it is unrealistic. Unrealistic timelines lead to corners being cut on data preparation, testing, and change management, which are precisely the activities that determine whether the system works in production.

7. No Data Assessment Phase

A partner who proposes to begin model development immediately, without a dedicated data assessment phase, is skipping the step that determines whether the project can succeed. 43% of companies cite data quality as their top AI obstacle. Discovering data quality problems during model development is the most expensive form of rework.

8. Pricing That Excludes Maintenance

A proposal that quotes only development cost without addressing Year 2+ maintenance is either naive or deliberately incomplete. As Section 7 documents, development accounts for only 40–50% of three-year total cost of ownership. A partner who leaves maintenance planning out of the proposal either does not understand the full investment or does not want you to understand it.
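The 40–50% figure can be turned around into a quick sanity check on any proposal. If development is X% of three-year TCO, the full cost is roughly the development quote divided by X%. The sketch below is minimal. The €100,000 quote is an illustrative number, and the 40–50% range is the one cited above, not a universal constant.

```python
def implied_three_year_tco(development_cost: int, development_share_pct: int) -> float:
    """Estimate three-year TCO from a development quote.

    If development is X% of total cost of ownership, then TCO = cost * 100 / X.
    """
    if not 0 < development_share_pct <= 100:
        raise ValueError("development share must be in the range (0, 100]")
    return development_cost * 100 / development_share_pct


if __name__ == "__main__":
    quote = 100_000  # illustrative development quote in EUR
    for share in (50, 40):
        tco = implied_three_year_tco(quote, share)
        print(f"{share}%: TCO EUR {tco:,.0f}, of which EUR {tco - quote:,.0f} beyond development")
    # 50%: TCO EUR 200,000, of which EUR 100,000 beyond development
    # 40%: TCO EUR 250,000, of which EUR 150,000 beyond development
```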

9. Inability to Explain Failures

Every experienced AI partner has had projects that did not go as planned. If a partner claims a 100% success rate or cannot describe a project that encountered significant challenges and how they addressed those challenges, they are either too inexperienced to have encountered failure or too dishonest to acknowledge it. Both are disqualifying.
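The decision rule stated at the start of this section is that one red flag warrants caution and three or more warrant disqualification. It can be written down as a small function, which stops evaluators from softening it case by case. The sketch below is minimal, and the flag labels are paraphrased from the headings above. Treating flag 9 as disqualifying on its own follows the text’s wording (“Both are disqualifying”), but that is an interpretation.

```python
# The nine red flags from this section (labels paraphrased from the headings).
RED_FLAGS = (
    "demo-driven sales",
    "vague or missing methodology",
    "resistance to IP discussion",
    "no production references",
    "single-technology approach",
    "unrealistic timeline promises",
    "no data assessment phase",
    "pricing excludes maintenance",
    "inability to explain failures",
)


def assess_red_flags(observed: set[str]) -> str:
    """Apply the section's rule: one flag means caution, three or more disqualify."""
    unknown = observed - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"not one of the nine red flags: {sorted(unknown)}")
    # Red flag 9 is described as disqualifying on its own, so it short-circuits the count.
    if "inability to explain failures" in observed or len(observed) >= 3:
        return "disqualify"
    if observed:
        return "proceed with caution"
    return "no red flags observed"
```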

The Twenty Questions to Ask Before Signing

These questions are designed to reveal the partner’s actual capabilities and working practices, not their marketing claims. Ask them in the evaluation meeting and assess the specificity and confidence of the responses.

Strategy and Approach

1. How would you define the business problem we’re trying to solve, and what metric would you use to measure success?

2. What is your methodology for determining whether custom AI is the right approach for this problem, compared with an off-the-shelf product or fine-tuning an existing model?

3. Describe a project where you recommended the client not proceed with AI. What was the reasoning?

Data and Technical

4. What does your data assessment phase look like, and what happens if data quality is below your threshold?

5. How do you handle integration with legacy systems that lack modern APIs?

6. What is your approach to model validation and bias testing?

7. How do you monitor model performance in production, and what triggers a retraining cycle?

Delivery and Track Record

8. How many of your completed projects are currently running in production, and for how long?

9. Can you provide a reference from a client in our industry with a similar use case?

10. Describe a project that did not go as planned. What went wrong and what did you learn?

11. What is your average timeline from project kickoff to production deployment?

Commercial and Legal

12. Who owns the trained model, the data pipeline, and the model weights after the project is complete?

13. Does your standard contract include any rights to use our data for purposes beyond this project?

14. What is included in the proposal cost, and what costs are excluded (infrastructure, retraining, maintenance)?

15. What is your pricing model: fixed-price, time-and-materials, or milestone-based?

Support and Continuity

16. What does your post-deployment support model include, and what does it cost?

17. What happens if the model’s accuracy drops below the agreed threshold in production?

18. How do you handle knowledge transfer to our internal team?

19. What is your approach to EU AI Act compliance for systems that may be classified as high-risk?

20. If we decide to change partners after deployment, what do we retain and what is the transition process?

A strong partner will answer all twenty questions with specificity and confidence. Evasion, deflection, or generic responses to any of these questions should be weighted as a negative signal in the evaluation.

Structuring Your RFP for Comparable Responses

An unstructured RFP produces incomparable responses. A structured RFP forces vendors to address the same criteria in the same format, enabling weighted scoring that reveals the best partner — not just the best writer.

Your AI project RFP should include these seven sections, each with specific instructions for the respondent:

- Section 1: Company and Team. Firm background, team composition for this project (named individuals and their relevant experience), and three comparable project references with client contact information.

- Section 2: Problem Understanding. The respondent’s understanding of your business problem, proposed success metric, and preliminary assessment of feasibility based on the information provided.

- Section 3: Methodology and Timeline. Phase-by-phase approach with deliverables, timelines, decision gates, and resource allocation per phase. Explicit statement of what triggers a scope or timeline change.

- Section 4: Technical Approach. Proposed technology stack, model architecture, data engineering approach, integration plan, and testing/validation methodology. Rationale for technology choices.

- Section 5: Commercial Terms. Total project cost broken down by phase, annual maintenance cost estimate, infrastructure cost estimate, retraining cost and frequency, and pricing model (fixed/T&M/milestone).

- Section 6: IP and Data Rights. Explicit statement of IP ownership for each asset category: client data, data pipeline, trained model, and partner’s pre-existing framework. Data usage restrictions and deletion terms.

- Section 7: Post-Deployment Support. Support model description, SLA terms, monitoring and maintenance approach, knowledge transfer plan, and transition/exit provisions.

Score each section using the weighted criteria from the evaluation framework. Require all respondents to present their proposal to the same stakeholder group, and include a live Q&A session where the twenty evaluation questions can be asked. The partner’s ability to answer unscripted questions is often more revealing than the written proposal.

Benelux-Specific Considerations

Choosing an AI partner in the Benelux region introduces specific considerations around language, regulation, and market context that influence evaluation and project success.

Regulatory familiarity. The Netherlands and Belgium sit at the intersection of multiple regulatory frameworks: the EU AI Act, AVG/GDPR, sector-specific regulations (DNB/AFM for financial services, IGJ for healthcare), and Dutch tax incentives for R&D innovation. A partner operating in the Benelux should demonstrate familiarity with these frameworks and how they affect AI design choices — particularly the EU AI Act’s distinction between limited-risk and high-risk AI systems, which determines documentation, transparency, and human oversight requirements.

Multilingual capability. Benelux operations frequently involve Dutch, French, German, and English, sometimes within a single business process. Custom AI solutions that process text (customer communications, documents, product descriptions) require NLP models that handle multilingual input accurately. A partner should demonstrate experience with multilingual NLP. That includes regional variation between the Dutch of the Netherlands and Belgian Dutch (Flemish), and between the French of France and Belgian French.

SME-oriented approach. The Benelux mid-market is characterised by companies with €2–50M revenue, 20–500 employees, and pragmatic decision-making cultures. These companies need partners who can work within realistic budgets (€25K–€150K, not €500K+), deliver tangible results within 12–20 weeks (not 12–18 months), and communicate in business outcomes rather than academic research terms. An AI partner whose typical engagement is enterprise-scale (€500K+, 12+ months) may not be calibrated for Benelux mid-market execution speed and budget expectations.

Proximity and accessibility. For companies where the AI system touches core operations, the ability to have face-to-face meetings during critical project phases (kickoff, data assessment results, deployment readiness review) adds significant value. A partner based in the Benelux or with a local presence offers faster communication cycles and eliminates timezone-related delays during critical phases.

The Partner Evaluation Scorecard

Use this scorecard to evaluate each potential partner on a 1–5 scale across the eight weighted criteria. Score based on evidence, not impression.

| # | Criterion | Weight | Partner A | Partner B | Partner C | Max weighted score |
|---|---|---|---|---|---|---|
| 1 | Domain Expertise | 25% | __ / 5 | __ / 5 | __ / 5 | 1.25 |
| 2 | Methodology Maturity | 20% | __ / 5 | __ / 5 | __ / 5 | 1.00 |
| 3 | Data Engineering | 15% | __ / 5 | __ / 5 | __ / 5 | 0.75 |
| 4 | Production Track Record | 10% | __ / 5 | __ / 5 | __ / 5 | 0.50 |
| 5 | IP & Data Rights | 10% | __ / 5 | __ / 5 | __ / 5 | 0.50 |
| 6 | Pricing Transparency | 8% | __ / 5 | __ / 5 | __ / 5 | 0.40 |
| 7 | Cultural Fit | 7% | __ / 5 | __ / 5 | __ / 5 | 0.35 |
| 8 | Long-Term Support | 5% | __ / 5 | __ / 5 | __ / 5 | 0.25 |
| | Weighted Total | 100% | __ / 5.00 | __ / 5.00 | __ / 5.00 | 5.00 |

Scoring guide: 1 = No evidence or clear weakness. 2 = Limited evidence, concerns. 3 = Adequate, meets minimum threshold. 4 = Strong, exceeds expectations. 5 = Exceptional, best-in-class evidence. Multiply each score by the criterion’s weight expressed as a decimal to produce the weighted score; for example, a domain expertise score of 4 gives 4 × 0.25 = 1.00. Then sum the weighted scores for each partner. The highest weighted total represents the best-evidenced partner, not necessarily the cheapest or the most technically impressive in demos.
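The scoring arithmetic above is simple, but it is easy to get wrong once it is spread across several spreadsheets. The sketch below is a minimal version in Python. The weights come from the scorecard, while the function name and the input format (criterion name mapped to a 1–5 score) are illustrative assumptions. The usage example reproduces the comparison from “What if no partner scores highly on all criteria?”. It assumes the stronger partner scores 3 on every criterion other than domain expertise and methodology maturity.

```python
# Criterion weights from the scorecard, in percent.
WEIGHTS_PCT = {
    "Domain Expertise": 25,
    "Methodology Maturity": 20,
    "Data Engineering": 15,
    "Production Track Record": 10,
    "IP & Data Rights": 10,
    "Pricing Transparency": 8,
    "Cultural Fit": 7,
    "Long-Term Support": 5,
}
assert sum(WEIGHTS_PCT.values()) == 100


def weighted_total(scores: dict[str, int]) -> float:
    """Weighted total on the 1.00-5.00 scale; every criterion must be scored 1-5."""
    if set(scores) != set(WEIGHTS_PCT):
        raise ValueError("score each of the eight criteria exactly once")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("scores must be on the 1-5 scale")
    # Multiply in whole percent and divide once at the end to avoid float noise.
    return sum(scores[c] * w for c, w in WEIGHTS_PCT.items()) / 100


if __name__ == "__main__":
    uniform = {c: 3 for c in WEIGHTS_PCT}
    focused = {**uniform, "Domain Expertise": 4, "Methodology Maturity": 4}
    print(weighted_total(focused), weighted_total(uniform))  # 3.45 3.0
```

Raising an error for a missing criterion is deliberate. It forces evaluators to score every row instead of letting a blank cell count as zero.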

Frequently Asked Questions

What questions should I ask an AI vendor?

Focus on twenty questions covering five areas. Strategy and approach covers how they define the problem and measure success. Data and technical covers data assessment, integration, and monitoring approach. Delivery track record covers production references, timeline reality, and failure examples. Commercial/legal covers IP ownership, total cost, and maintenance model. Support and continuity covers post-deployment support, knowledge transfer, and exit provisions. The specificity and confidence of the partner’s answers reveal more than their marketing materials.

How do I evaluate AI consulting firms?

Use a weighted scoring framework with eight criteria: domain expertise (25%), methodology maturity (20%), data engineering (15%), production track record (10%), IP ownership (10%), pricing transparency (8%), cultural fit (7%), and long-term support (5%). Score each partner 1–5 based on verifiable evidence, multiply by weight, and compare totals. Supplement with reference checks from clients in your sector.

What is the biggest risk in choosing an AI partner?

The biggest risk is selecting a partner based on demo impressions or price rather than methodology and track record. 74% of companies struggle to extract AI value, and many failures trace back to partner selection based on presentation quality rather than implementation capability.

Should I choose a large consulting firm or a specialised AI partner?

Specialised AI partners typically offer deeper technical expertise, faster delivery, more competitive pricing, and more direct access to senior practitioners. Large consulting firms offer broader service portfolios and brand-name reassurance but often deliver through junior teams at premium rates. For mid-market companies with €25K–€200K budgets, a specialised partner is typically the better fit.

How important is IP ownership in AI projects?

Critical. 92% of AI vendor contracts claim broad data usage rights by default. If you do not negotiate explicit ownership of your data pipeline, trained model, and model weights, you may find that your competitive advantage is not exclusively yours. The IP discussion should happen before the proposal, not in the contract fine print.

How long should vendor evaluation take?

For a mid-market AI project (€25K–€150K), evaluation should take 3–4 weeks: 1 week for RFP distribution and response, 1–2 weeks for evaluation meetings and reference checks, and 1 week for scoring and decision. Longer evaluation does not improve decision quality; it only delays time to value.

What if no partner scores highly on all criteria?

Perfection is not the standard — evidence-based confidence is. Focus on the weighted total: a partner scoring 4+ on domain expertise and methodology maturity with 3 on support is a stronger choice than a partner scoring 3 across all criteria. Prioritise the criteria that predict success (domain expertise and methodology), and negotiate improvements on lower-scoring areas during the contracting phase.

Key Takeaways

- Vendor-led implementations succeed at 2× the rate of internal builds (67% vs. 33%) — partner selection is the highest-leverage decision in your AI investment.

- Use the eight weighted criteria: domain expertise (25%), methodology maturity (20%), data engineering (15%), production track record (10%), IP ownership (10%), pricing transparency (8%), cultural fit (7%), and long-term support (5%).

- Watch for nine red flags: demo-driven sales, vague methodology, IP resistance, no production references, single-technology approach, unrealistic timelines, no data assessment phase, pricing that excludes maintenance, and inability to discuss failures.

- 92% of AI vendor contracts claim broad data usage rights — negotiate IP ownership explicitly before signing.

- Structure your RFP in seven sections for comparable responses, and score using the weighted evaluation scorecard.

- In the Benelux mid-market, prioritise partners with regulatory familiarity, multilingual capability, SME-oriented delivery speed, and local accessibility.

Sources

1. MIT Project NANDA — The GenAI Divide: State of AI in Business 2025. fortune.com

2. BCG — AI Adoption in 2024: 74% of Companies Struggle. bcg.com

3. Stanford Law School / TermScout — Navigating AI Vendor Contracts, March 2025. law.stanford.edu

4. RTS Labs — Enterprise AI Roadmap: 70% of Failures from Data Issues. rtslabs.com

5. WorkOS — Why Most Enterprise AI Projects Fail. workos.com

6. Arphie — Mastering the Vendor Selection Process 2025. arphie.ai

7. BrianOnAI — Third-Party AI Contract Addendum Template. brianonai.com

8. Netguru — How to Evaluate AI Vendors: A Step-by-Step Guide. netguru.com

9. CTO Magazine — The Great AI Vendor Lock-In. ctomagazine.com

10. European Commission — EU AI Act Regulatory Framework. digital-strategy.ec.europa.eu